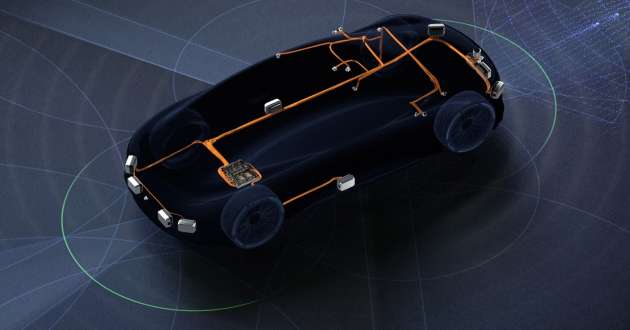

At this year's GPU Technology Conference (GTC), Nvidia unveiled the Drive Hyperion 8 platform, which will be made available to automakers and other OEMs for 2024 vehicle models. Since 2015, the technology company has supplied computer platforms that enable deep learning-powered autonomous and driver assistance features.

The latest Hyperion 8 represents another step forward for the company, as it is an all-in-one solution developed specifically for full self-driving systems. Whereas previous Nvidia offerings came with only the computer architecture, the new platform also includes a sensor set sourced from leading suppliers such as Continental, Hella, Luminar, Sony and Valeo.

According to Nvidia, the production platform is designed to be open and modular, allowing customers to choose only what they need, from core compute and middleware all the way to NCAP, level 3 driving, level 4 parking and AI cockpit capabilities.

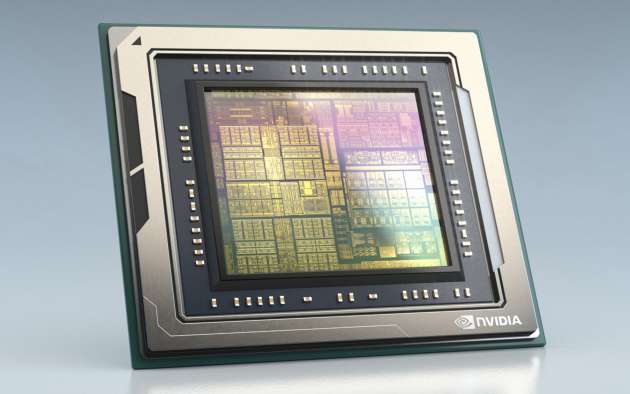

At its core, Hyperion 8 is powered by two Drive Orin systems-on-a-chip (SoCs) that provide redundancy and fail-over safety, allowing the platform to meet systematic safety standards such as ISO 26262 ASIL-D. According to Nvidia, Lotus, QCraft, Human Horizons and WM Motor have already chosen Drive Orin for their future vehicles, joining Mercedes-Benz, Volvo, Nio and VinFast, among others.

The Drive Orin SoC features a GPU built on the company's 7 nm Ampere architecture and provides the computing power (254 trillion operations per second) needed to run autonomous functions and deep neural networks. Because the platform is scalable, it can be upgraded to the upcoming Drive Atlan SoC in the future, keeping it relevant for the companies that adopt it.

In its current form, Hyperion 8 comes with the DriveWorks Sensor Abstraction Layer, which streamlines sensor setup through easy-to-use plugins. Nvidia says the platform has 12 cameras, nine radars, 12 ultrasonic sensors and a front-facing lidar from the aforementioned partners. That said, the openness and flexibility of the ecosystem allow vehicle manufacturers to customise the platform to their requirements, supported by a comprehensive toolset.

This includes Omniverse Replicator for Nvidia's Drive Sim simulation platform, an engine that generates synthetic data with ground truth for training AI networks. Put simply, Omniverse Replicator aims to bridge the gap between simulated and real-life situations by generating scenarios with high fidelity and realism.

Most deep neural networks powering an autonomous vehicle's perception are made up of two parts: an algorithmic model and the data used to train that model. Engineers have dedicated a lot of time to refining the former, but the data side sometimes falls short because real-world data is incomplete, as well as time-consuming and costly to collect.

By augmenting real-world data collection with synthetic data generated in Omniverse Replicator, engineers can rapidly manipulate scenes in a detailed sandbox of sorts, repeating them as many times as needed. This helps speed up autonomous vehicle development while ensuring the vehicles are safer and more efficient prior to large-scale deployment.

Mapping is another fundamental pillar of self-driving, so Nvidia also introduced Drive Mapping, which combines its Drive ecosystem with DeepMap technology. Map data is collected by dedicated data collection cars or by vehicles running Hyperion 8, then fed into the Drive AGX AI compute platform to build and update maps in real time, creating a scalable solution for autonomous driving globally.

In addition to these new technologies, Nvidia showcased its Drive Concierge and Drive Chauffeur software platforms integrated into an S-Class equipped with Hyperion 8. These allow for hands-free driving from address to address, with self-parking available upon arrival.

Complementing these systems are Drive IX and Omniverse Avatar. The latter combines speech AI, computer vision, natural language understanding, recommendation engines and simulation, allowing users to hold real-time conversations with an AI depicted as an avatar on the vehicle's infotainment display and to issue commands without needing physical controls or touchscreens.
