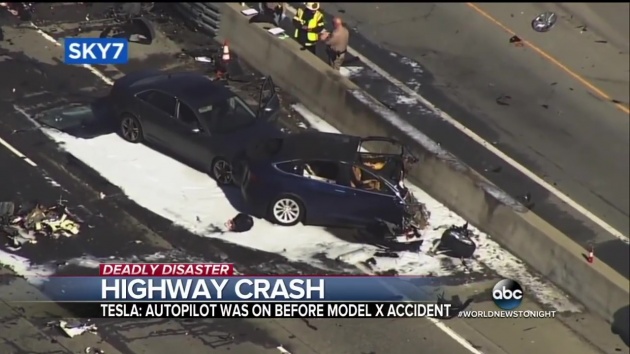

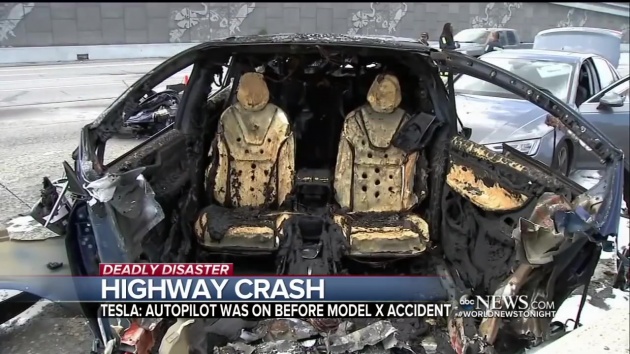

On March 23, 2018, 38-year-old Walter Huang was killed when his Tesla Model X crashed into a freeway divider on Highway 101 near Mountain View, California and proceeded to catch fire – the vehicle’s Autopilot system was engaged at the time.

Since then, Tesla has released two statements (March 27 and 30) to shed more light on the incident, with information extracted from the vehicle’s computer logs. According to the carmaker, the Autopilot system was indeed engaged, but the driver’s hands were not detected on the steering wheel for six seconds prior to the collision.

It added that the system had provided several visual warnings and one audible hands-on warning earlier in the drive, but these were not heeded. Furthermore, it noted the driver had about five seconds and 150 metres of unobstructed view of the concrete divider, but no action was taken to prevent the crash.

Tesla also attributed the severity of the crash to the fact that the crash attenuator, a highway safety barrier designed to reduce the impact into a concrete lane divider, had been crushed in a prior accident without being replaced. It said it had “never seen this level of damage to a Model X in any other crash.”

To reassure the public that the Autopilot system is safe, Tesla said owners have driven past the same stretch of highway where the incident took place with the system active without incident – about 85,000 times since Autopilot was first rolled out in 2015 and roughly 20,000 times since the beginning of the year. It stated “there are over 200 successful Autopilot trips per day on this exact stretch of road.”

While the information released may be fairly comprehensive, the U.S. National Transportation Safety Board (NTSB), the government agency responsible for such investigations, is not pleased with Tesla’s decision to release it.

According to a report by The Washington Post, rules governing investigations state “party participants or their respective organisations must refrain from providing opinions or analysis of the accident outside of the participants in the investigation. Failure to abide by these requirements may lead to removal of a party from the investigation.”

Tesla CEO Elon Musk addressed the NTSB’s concerns in a tweet on April 3, saying, “lot of respect for NTSB, but NHTSA [National Highway Traffic Safety Administration] regulates cars, not NTSB, which is an advisory body. Tesla releases critical crash data affecting public safety immediately and always will. To do otherwise would be unsafe.”

The incident has raised more questions on how to approach the issue of self-driving cars, in the wake of this month’s fatal Uber crash involving a pedestrian and an autonomous car. The death of Huang is the third involving the Autopilot system, with the other cases being Gao Yaning in China and Joshua Brown in Florida. In both of those cases, the drivers’ Model S cars had their systems engaged at the time.

Lot of respect for NTSB, but NHTSA regulates cars, not NTSB, which is an advisory body. Tesla releases critical crash data affecting public safety immediately & always will. To do otherwise would be unsafe.

— Elon Musk (@elonmusk) 2 April 2018

Tesla > all

1 thing for sure, if Teslas were to be driven on Msian roads, the autopilot would go haywire.

Wait a minute I thought if there was no intervention from the driver, wouldn’t the vehicle come to a slow stop? What happened to that?

They mentioned that the previous accident on the divider made it unrecognisable to the Autopilot, hence the death. And for 5-6 seconds before the accident the driver was not holding the steering wheel at all and had 150 metres of distance to react, but I guess he was either sleeping or playing with his phone, hence the unnecessary accident happened.

If the system cannot detect an ever-changing environment, then it has no place being outside on public roads. What happens if there’s a car stalled on the side with a family?

Isn’t the purpose of the autopilot that drivers do not have to control the steering? If they have to control the steering, it’s called autocruise, not autopilot… lol

Tesla is not yet at Level 5 autonomy.

Lolz! Another “it’s the driver’s fault” excuse. Tesla’s system has been proven to be buggy as hell, yet money solves everything, and they’ve got loads of it (for now).

They should just limit Autopilot to 60 km/h or totally disable it until a better system is retrofitted. No excuses when the crash happens on a straight highway.

It just means that the autopilot system is not mature yet. I thought that with autopilot I could read or nap in the car.

Yup…we so use to our myvees…tht we can multitask like crazy…while doing 180…cool right…tesla fellow should consult us 1st..

IMHO, this is irresponsible on Tesla’s part. If Tesla requires the driver to agree to keep their hands on the wheel at all times before they can use Autopilot, then Tesla should assume that the driver is incapacitated and stop the vehicle at a safe spot when the Autopilot system detects that the hands are off the steering wheel for an extended period of time.

It will, after a certain time, if the driver doesn’t respond. You can view some videos on YouTube of Autopilot doing it. But just 6 seconds of not responding will not disable Autopilot yet.

OMG, that is a US$80,000 car. It is hardly recognizable after it burnt. How did the car burn in the 1st place??

Lithium-ion batteries are notoriously flammable (Note 7, MH370). Once one cell catches fire, it will cause the next cell to catch fire as well and it can’t be put out easily.

Jeremy Clarkson also highlighted this when he test drove the Model X.

U mean the U.S. National Transportation Safety Board (NTSB) did not know that the lithium-ion batteries will explode on impact!

You’re riding on top of a flammable battery. Impact, leaks, short circuits, that’s reason enough for the car to burst into flames.

Of course the NTSB knows. Conventional cars can also catch fire and burn during a crash. So what’s your point again?

Tesla has never claimed that their system is fully autonomous. In fact, they even did a press release stating so. Still, stubborn owners keep pushing the limits and leaving their lives in the hands of a computer.

Honestly I’m surprised there are so few crashes…

Even being able to disable it in the last 6 seconds would not have prevented the crash!

Typical Elon Musk. Cover up all of his failures and blame others if he must.